Slides from my Measure the Future presentation at Computers in Libraries 2018.

I am pleased and proud to announce, after 2 years of development, that the Measure the Future project is entering its next stage and is now officially in public Beta.

This means several things:

First

We have worked very hard to identify a ton of bugs from our Alpha version. In the time since our Alpha, we’ve completely changed our hardware (from the Intel Edison platform to the Raspberry Pi 3) which meant porting all of our code over, re-writing installers, and more. The new hardware is faster, has more space for data storage, and is overall just enormously more powerful than our Alpha sensor hardware was. I’m very proud of the work that went into the Beta hardware units, and can’t wait to get them into production.

Second

Our desire to always be open in our processes, code, and ethos means that our Beta is public. This release marks the first publicly available build, install, and configuration instructions for the project. If you or your library wants to play around with, or even implement our tool to better understand space usage in your library, you are welcome to do so. Also, all the code we have written for the project is available, and if you would like to contribute, you may do so via Github.

Third

We will be continuing our work with our Alpha partners (Meridian Public Library, SUNY-Potsdam, and the New York Public Library) to move them to our Beta sensors. Measure the Future is also adding additional locations for installs with a new round of 4 Beta partner libraries. These additional locations (announcement soon on who those are) will give us even more feedback and will work with us to determine the best way to present this new type of library usage data. We will be answering the questions that our Beta partners want answered, so if you have questions you want our help with, please let us know. We have room for a couple more libraries in our Beta testing, and would love to work with you.

The big development goal for our Beta period is the move from local visualization of activity and attention in library spaces to a cloud-based portal that will allow for much richer visualizations. We are dedicated to making this move from local-to-cloud as privacy-focused and security-aware as possible, and so we will be taking great care in how we move forward.

We are extremely excited to be continuing development of Measure the Future, and look forward to building this tool for librarians, by librarians.

It’s been too long since a public update made its way out for Measure the Future. In light of the rapidly approaching ALA Annual 2016, here’s the current state of the Project….

The Good News

Enormous amounts of code have been written, and we have the most complicated parts nailed down. When we started on this Project, the idea of using microcomputers like the Edison to do the sort of computer vision (CV) analysis that we were asking for was still pretty ambitious. We’ve worked steadily on getting the CV coding on the Edison done, and it’s a testament to our Scout developer, Clinton, that it’s as capable as it is.

The other major piece of the Project, the Mothership, was also challenging, although in a different way. Working through the design of the user interfaces and how we can make the process as easy as possible for users turned out to be more difficult than we’d imagined (isn’t it always?). Things like setting up new Scouts, how libraries will interact with the graphs and visualizations we’re making, and most importantly how we ensure that our security model is rock solid are really difficult problems, and our Mothership developer, Andromeda, has been amazing at seeing problems before they bite us and maneuvering to fix them before they are a problem.

But if I specified good news, you know there’s some bad news hiding behind it.

The Bad News

We’re delayed.

We have working pieces of the project, but we don’t have the whole just yet.

Despite enormous amounts of work, Measure the Future is behind schedule. The goal of the project, from the very beginning back in 2014, was to launch at ALA Annual 2016. While we are tantalizingly close, we are definitely not ready for public launch, and with ALA Annual 2016 now just a couple of weeks away, it seemed like the necessary time to admit to ourselves and the public that we’re going to miss that launch window.

As noted in the “Good News” section, the individual pieces are in place. However, before these are useful for libraries, the connection between them has to be bulletproof. We are working to make the setup and connection as automatic and robust as we possibly can, and as it turns out, networking is hard. Really hard. No, even harder than that.

We’re struggling with making this integration between the two sides automatic and bulletproof. There’s no doubt that in order for the Project to be useful to libraries, it has to be as easy as possible to implement, and we are far from easy at this point. We still have work to do.

I’m very unhappy that we’re missing our hoped-for ALA Annual 2016 launch date. There’s a lot of benefit to launching at Annual, and I’m very disappointed that we’re not going to hit that goal. But I’d rather miss the date than release a product to the library world that isn’t usable by everyone.

Conclusion

Measure the Future is still launching, and doing so in 2016. But we’re going to take a little longer, test a little more thoroughly, get the hardware into the hands of our Alpha testers, and ensure that when we do release, it’s more than ready for libraries to use. We’ve also been able to make a deal with a Beta partner that will really test what we’re able to do, and we’re really excited about the possibilities on that front. This extra time also gives us an opportunity for additional partnerships and planning for getting the tool out to libraries. More news and announcements on that front in a month or so.

We’re going to make sure we give the libraries, museums, and other non-profits of the world a tool that reveals the invisible activity taking place in their spaces. And we’re going to do it this year. Jason will be attending ALA Annual in Orlando, and he’ll be talking about Measure the Future every chance he gets. We don’t have a booth (a booth without the product in hand seemed presumptuous) but it’s easy to get in touch with Jason if you want to ask questions about the project. If you have questions, feel free to throw them his way.

We’re rounding the bend on the last bits of development on Measure the Future before we do Alpha installs. Lots of details to get to, and tons of work yet to do…but the goal is in sight and the things we’re solving for are mostly known. We’re still aiming hard at launching at the ALA Annual Conference in June, and barring unforeseen problems we’re gonna hit that date.

One of the things we’re currently working on is the install of the software for the Scouts and the Mothership onto the respective hardware. Andromeda Yelton wrote a post about her side of that world (the Mothership) and how important it is to Measure the Future to make the installs as easy as we possibly can. From her post:

Well! I now have an alpha version of the Measure the Future mothership. And I want it to be available to the community, and installable by people who aren’t too scared of a command line, but aren’t necessarily developers. So I’m going to measure the present, too: how long does it take me to install a mothership from scratch — in my case, on its deployment hardware, an Intel Edison?

tl;dr 87 minutes.

Is this good enough? No.

For those of you that haven’t seen it, here’s the video (no audio, unfortunately) that we played at the booth at ALA Midwinter a couple of months ago that has demo footage of the Computer Vision that’s going on under the hood.

We’re very excited about getting this project into your hands, and we’re working hard to make it as easy as we can. Keep watching for more info!

Thus far in the development process for Measure the Future I’ve been working to ensure that I understood the need and was heading down a path that librarians found valuable. I did this via a survey given to both of my Alpha partners as well as linked in Library Journal, and after sorting through the dozens of responses I had a much clearer picture of what the community saw as valuable, and what maybe could wait for the version 2 of the project.

The next step was proof of concept for the hardware, which I put together and demoed at the ALA Annual conference in San Francisco in July. That demo went very well, with yet more awesome feedback and renewed interest from libraryland to push us forward. The hardware demos were great, and people seemed to like the progress we were making.

Now is the time in the project that I’ve been working towards since ALA Annual. I knew that the project needed a particular skill set to move forward, and that I probably needed more than one person to make it happen. I needed someone with a solid understanding of computer vision, someone who’s worked with the sorts of problems inherent with using cameras as a data source. And I needed someone who had a great head for turning numbers into meaning, that could look at the data from the sensors and make those numbers work for the libraries in question. I know how everything fits together, but I learned a long time ago that it’s faster to find experts than it is to try to learn everything that I might need to accomplish a project. So I did just that…found two experts that I’m beyond thrilled to work with.

The first is Clinton Freeman, a software developer from Cairns, Australia. Clinton was introduced to me by the amazing Daniel Flood of The Edge in Queensland, Australia. If you aren’t aware of the things they are doing at The Edge, they (like much of Australian librarianship) are way out in front of the future of the profession, making it happen. Clinton worked with them on a couple of projects, and has a history of working with computer vision to do really interesting things…the more Daniel told me about him, the more I knew he was the right guy for the job. I contacted him and laid out the problems we are trying to solve, and how I see it coming together, and in short order he was sold enough to join the team. Clinton understands the library world, and is going to be working on testing and improving my initial hardware work, the computer vision analysis, and API development for the project.

The second new member of the team is someone who I can almost literally say needs no introduction, at least to the U.S. cadre of online librarians. I needed someone that could take the raw data coming off the sensors and write elegant algorithms to identify patterns and derive areas of interest from the flood of numbers…and do so with a keen eye on what librarians need to know (and more importantly, how not to overwhelm them with information). An amazing developer that groks libraries, is a math wizard, and can do front end web dev with the best of them? That’s a description custom-made for Andromeda Yelton if ever I saw one, and I’m beyond thrilled that she’s agreed to work with Clinton and me on the development of the project.

So this takes my team up to 6 total: myself, my alpha partner libraries represented by Gretchen Caserotti and Jenica Rogers, my amazing hardware advisor from Sparkfun Electronics Jeff Branson, and my development team in Clinton and Andromeda. The development team is in the process of setting development milestones now, in prep for a sprint from now until ALA Midwinter in Boston, where I hope to have the next round of demo hardware with some UI and UX to show off. Our timeline has always been targeting ALA Annual 2016 in Orlando to have the project ready for libraries to use, and I feel more confident than ever that we are going to do just that.

Expect some more reports as we set specific goals for the next few months, as there’s nothing like public expectation to make one hit deadlines. Here we go!

I realized a few weeks ago that I haven’t said nearly enough about the technology and plans for Measure the Future. Mostly I haven’t because I’m in crazy ramp-up mode, trying to get technology sorted, read All The Things about computer vision and OpenCV and SimpleCV, get some input from my Alpha testers, and generally keep the fires stoked and burning on the project. If I expect the library community to be excited about the work I’m doing, I need to get some of the things out of my head where you can all see them and, hopefully, help make them better.

The thing that I’ve gotten the most comments and emails about is the degree to which Measure the Future is “creepy.” There is both an implicit and explicit expectation of privacy in information seeking in a library, and when someone says they are thinking about putting cameras in and watching patron behavior…well, I totally see why some people would characterize that as creepy.

So here’s why what I am planning isn’t creepy. At least, I don’t think so.

What I want to do is measure movement of people through buildings, as a first step towards better understanding how the library building is used. There are dozens of ways this can be done, and the ones that I’ve had suggested to me or asked about in the last month include:

- Infrared sensors

- Lasers

- Ultrasound sensors

- Wifi skimming

- iBeacons

I ruled out the first three because they don’t give enough data. You need multiple sensors to get things like direction of movement, and they all have serious trouble dealing with people moving in groups. I ruled out the last two because they ignore huge segments of the population that are integral to the library, only counting people who happen to have certain technology on their person as they move around. I also think that gathering data by passively skimming info from cell phones is inherently identifiable. Both of these last methodologies have the capacity to capture information that is unique to the person, and I want to avoid that can of worms. A core tenet of the system I’m building is Do Not Know Who You Are Counting.

The method that I am going to test first is machine vision…the use of cameras and algorithms to count people as they move through the frame of the camera. WAIT JUST A MINUTE, I hear you screaming. DID YOU JUST SAY YOU WERE GOING TO BE USING CAMERAS ALL OVER THE LIBRARY? Yep, I did. And here’s why doing so doesn’t harm privacy: the cameras aren’t going to be taking pictures in any way that you are probably thinking about “taking pictures.”

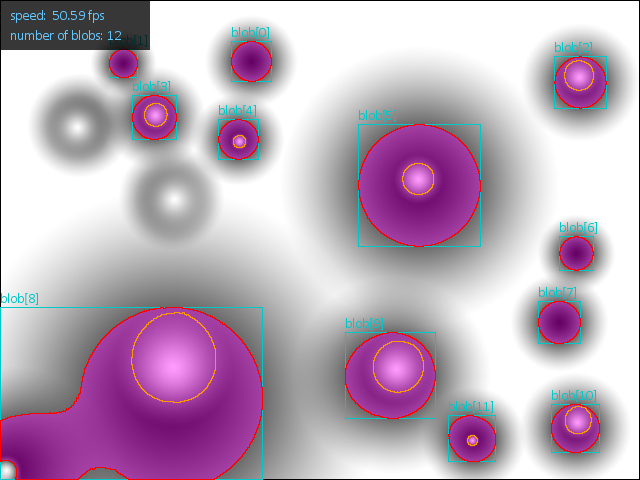

A Quick Primer on Computer Vision

Computer Vision is just what it sounds like…a computer using a camera as an input device and being capable of parsing the contents of said image into inputs. The examples that people have likely seen on procedural crime dramas of machine vision are not what we will be doing, however. No facial recognition, no body-heat tracking, no reading-license-plates-from-orbit (that’s reserved for the NSA). What we are focusing on is a feature of computer vision called “blob detection.” The computer watches a space, and ignores the static background, focusing instead only on the moving pixels in the image. By tracking moving, contiguous sets of pixels, you can count people, tell which direction and how quickly they are moving, and get information about how long they stay in an area. In theory, you can look at the size of the “blob” and interpolate from it whether it is one or many people.

The thing you can’t do from blob detection is identify who the blob in question actually is. In fact, the pictures that are analyzed aren’t even saved. They are examined by the computer vision system, blobs are counted and otherwise noted in a database, and then the photograph is gone and the system moves on to the next one. By storing only these incremented counts, along with a few bits of data like directionality and speed, we can derive a huge amount of information about behavior in the library without resorting to the identification of the individual, and without any lingering data trail for misuse. Photos of individuals are not saved, and they can’t be retrieved for later examination, nor will they ever be saved through our setup.
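To make the idea concrete, here is a minimal sketch of blob counting in plain Python. It is not the project's code: the function names, the brightness threshold, and the tiny hand-made "frames" are all assumptions for illustration, and a real sensor would more likely lean on a computer vision library. It compares a frame against a static background, groups contiguous changed pixels into blobs, and returns only a count.

```python
from collections import deque


def moving_mask(background, frame, threshold=30):
    """Mark pixels whose brightness differs from the static background.

    `threshold` is an assumed value chosen for this toy example.
    """
    return [
        [abs(p - b) > threshold for p, b in zip(frame_row, bg_row)]
        for frame_row, bg_row in zip(frame, background)
    ]


def find_blobs(mask, min_pixels=2):
    """Group contiguous moving pixels (4-connected) and return each blob's size."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    sizes = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first flood fill over one connected region.
            queue = deque([(r, c)])
            seen[r][c] = True
            size = 0
            while queue:
                y, x = queue.popleft()
                size += 1
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            if size >= min_pixels:  # ignore single-pixel noise
                sizes.append(size)
    return sizes


def count_blobs(background, frame):
    """Return only the number of blobs; the frame itself is never stored."""
    return len(find_blobs(moving_mask(background, frame)))


# Hypothetical 4x6 grayscale frames: a flat background and two moving regions.
background = [[10] * 6 for _ in range(4)]
frame = [
    [10, 200, 200, 10, 10, 10],
    [10, 200, 200, 10, 10, 10],
    [10, 10, 10, 10, 180, 180],
    [10, 10, 10, 10, 10, 10],
]
blob_count = count_blobs(background, frame)
```

The point of the sketch is that `count_blobs` hands back an integer and nothing else, so once the function returns there is no image left to retrieve. Blob sizes from `find_blobs` are the sort of signal you might use to guess whether a blob is one person or several.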

This is how I plan to gather important and useful data while maintaining the anonymity of the individuals in question. Measure the Future is committed to this as a grounding principle, and our code and hardware will always be open so that others can ensure that we are treating the data in question properly, safely, and responsibly.

Data structure

As a result of these concepts, I think that the structure of the data will be something like:

- Time/Date Stamp

- Location of Camera

- Number of People

- Direction of movement

- Speed of movement

Each of these data points will be captured by each individual camera that is “watching” an area. I envision libraries putting cameras in any area where they are interested in recording patron activity, such as the entry doors, meeting room entrances, in common areas, and in the stacks/new book displays. These stats would be saved, and available locally for the library to view via a browser-based interface in the style of Google Analytics, or to query via database calls or an API. The length of time that the stats are saved would be up to the individual library. And it would be up to the individual library whether or not to share the data with other libraries. The data will not be available to the Measure the Future project directly. It will be entirely in the control of the library that has implemented the system.
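As a rough illustration of that structure, one stored observation might look something like the following. The class name, field names, and units (degrees for direction, meters per second for speed) are my assumptions for the sketch; nothing here is a finalized schema.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class Observation:
    """One anonymous count from one camera. No image, no identity."""
    timestamp: datetime        # Time/Date Stamp
    camera_location: str       # Location of Camera
    people_count: int          # Number of People
    direction_degrees: float   # Direction of movement (assumed unit)
    speed_m_per_s: float       # Speed of movement (assumed unit)


# Hypothetical example values.
obs = Observation(
    timestamp=datetime(2015, 3, 1, 14, 30, tzinfo=timezone.utc),
    camera_location="entry doors",
    people_count=3,
    direction_degrees=90.0,
    speed_m_per_s=1.2,
)

# A plain dict is easy to write to a local database or return from an API.
record = asdict(obs)
```

Note what is absent: there is no field that could hold a photo or anything tied to a particular person.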

I won’t promise this for the 1.0 release, but one of my goals is also for the data to be encrypted while stored on the device and in transit to the local interface server.

Hardware

I don’t have nearly all the answers just yet, but I can wave in the direction of what I’d like to use. Another of the goals of the project is to use as much open hardware as possible in putting together the system. This ensures that the project can be replicated without issue, anywhere that you can source the hardware components…there is no “lock in” with proprietary hardware that has to be upgraded or fixed only by a vendor. It also will allow individual libraries and librarians to experiment freely with the systems, hopefully improving them and sharing said improvements with the community of users.

The equipment used will be Raspberry Pi based, and each camera unit will contain a camera + a Raspberry Pi that will act as the local brain for the computer vision. My plan is for all of these sensors to talk to a central statistics server that will act as the hub for collection and reporting of stats to the librarians. You will be able to have many sensors for every server (how many? still working on that…). The server will also likely be Raspberry Pi based, and my preference is for the sensors and the server to talk to each other through a private network, either via Xbee or standard wifi, but (this is important) not connected to the existing network of the library in question. It doesn’t need it, getting networking permission in some libraries is crazy difficult, and it simplifies the design and building. It’s possible that, during testing, we will discover that there is a better platform for this than the Raspberry Pi…the Intel Galileo, for example. Only testing will tell.

My hope is that this entire system, sensor clients and statistics server, will communicate between each other directly, and not over the wider Internet. There are advantages and disadvantages to this plan, but I feel like the benefits of not having to fight with IT issues regarding implementation of the system will make it worth it. This is the most tenuous portion of the overall plan, as there are a myriad of ways that it could be architected and choices made about wireless protocols. But this is the initial road down which I am heading vis a vis development, and if it is necessary at some point to curve with the road, I will let the community know.

Conclusion

We take patron privacy very seriously at Measure the Future. And we reject the notion that gathering useful statistics necessarily puts patron privacy in jeopardy. It is possible to both observe and collect information about patron browsing habits and activity inside the library without doing so in a way that is identifiable to an individual patron.

So that’s exactly what we plan to do.

Keep watching. And let me know what’s wrong with my plan.

EDIT: autoplaying video removed – use link below to see video.

A local Boise Fox affiliate did a story on Meridian and their involvement in the Measure the Future project!

While the report manages to hit the “omg sensors” point a few too many times, and they get the details wrong about how the information gathered is going to be shared…it’s still really awesome to see the project get some airtime. And it’s always a treat to see Gretchen being awesome. Enjoy!

ALA Midwinter 2015 Presentation

At ALA Midwinter 2015, the Knight Foundation gave a stage to the 8 News Challenge winners in order to introduce their projects to the world. Here’s the presentation I put together for Measure the Future. It’s also one of the few chances you have to see me speak while wearing a suit.

Over at Miskatonic University Press, William Denton writes up a good summary of concerns about the Measure the Future project and privacy. In that post, he says:

Measure the Future has all the best intentions and will use safe methods, but still, it vibes hinky, this idea of putting sensors all over the library to measure where people walk and talk and, who knows, where body temperature goes up or which study rooms are the loudest … and then that would get correlated with borrowing or card swipes at the gate … and knowing that the spy agencies can hack into anything unless the most extreme security measures are taken and there’s never a moment’s lapse … well, it makes me hope they’ll be in close collaboration with the Library Freedom Project.

A fine, fine point, and one that I felt was important enough that I actually made sure to include a slide in my announcement presentation (which will be going up here on the blog asap) about privacy and how I am very aware of the potential issues here. I have spent a decent amount of time thinking about the threat models for data of this type, and how to properly anonymize/aggregate the data collected by our sensors.

While we are still early on in the thinking about how best to collect the data we need, my best guess right now is that we will be using Machine Vision-based low-resolution image sensors to act as our “counters”. There are many, many ways to count people: laser tripwire sensors, infrared sensors, ultrasonic sensors, and more. But machine vision is the one that gives us the most flexibility of placement, and it handles two very tricky problems (the multiple-body problem and being able to count directionality of movement) pretty well. Plus, machine-vision-based sensors can get better faster via software updates than any of the other types.

Using these types of sensors, the data collected will be something like a timestamped integer along with a directional measure (into the space, out of the space). There won’t be any form of individualized tracking…we don’t need to know who the patron is, and we don’t care. Correlation with circulation data, which I do think will be incredibly valuable in order to see if browsing behaviors correlate with circulation, can be done without caring at all about patron information. All we need to know is if a book circulated (any book) in the range that was browsed (by anyone). It doesn’t matter for our purposes if it’s the same person doing both things, as we’re just going to be looking at correlation in large data sets.
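Here is a small sketch of what that kind of patron-free correlation could look like. The shelf ranges and all of the numbers are invented for illustration, and a plain Pearson correlation is just one assumed way to compare the two sets of totals; the inputs are aggregate counts per range, with nothing in them about who browsed or who borrowed.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical totals for one day, keyed by shelf range.
# Sensor side: how many people were counted browsing each range.
browse_counts = {"000-099": 12, "100-199": 30, "200-299": 7, "300-399": 45}
# Circulation side: how many items (any item, any borrower) went out per range.
checkouts = {"000-099": 3, "100-199": 9, "200-299": 2, "300-399": 14}

ranges = sorted(browse_counts)
r = pearson(
    [browse_counts[k] for k in ranges],
    [checkouts[k] for k in ranges],
)
```

A value of `r` near 1 would suggest that heavily browsed ranges also circulate heavily. With real data you would want far more than four ranges and one day before reading anything into it.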

Believe me, I understand how much this data could be abused. Not only do I plan to build measurement tools that respect patron privacy, I’m going to try and build tools whose data structures and management make it impossible for libraries to misuse the data at all. And I’m totally happy to work with The Library Freedom Project or anyone else that can help me make sure that our data is clean and free of concern.

Just a quick link roundup on media coverage of the announcement around the web:

- Measure the Future – a Knight Foundation Grant winner from Sparkfun Electronics

- Knight News Challenge: How Can Libraries Innovate? from PBS

- Knight News Challenge from Nieman Lab

- Knight Foundation ponies up $3M for projects that ‘leverage libraries as a platform’ from Venturebeat

- News Challenge announces winners from American Libraries Magazine
